The Face of Satisfaction in Human-AI Teaming

Project Overview

Human satisfaction in collaboration isn’t just about completing a task—it’s about interaction quality, emotional engagement, and social connection. This research explored how robotic behavior affects human satisfaction in a structured cooperative task.

Research Approach

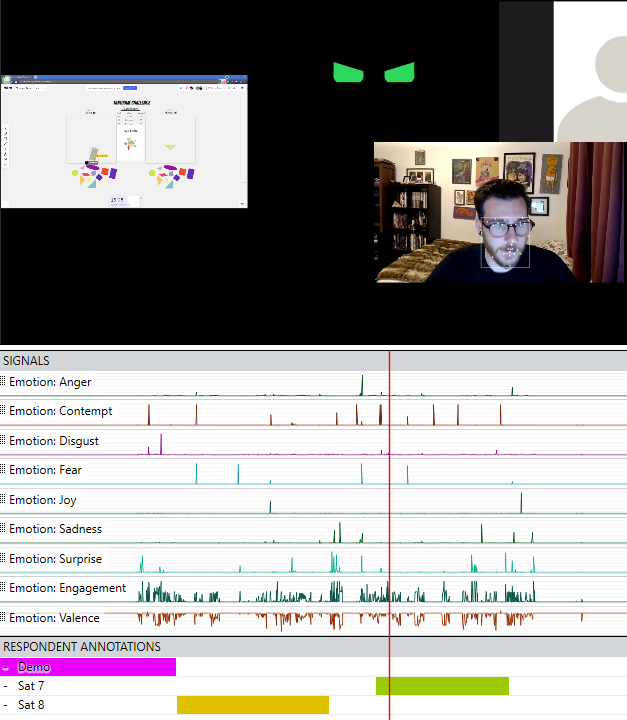

We developed an online collaborative task on a Miro virtual whiteboard, in which participants taught a robotic agent how to assemble tangram puzzles. This setup let us manipulate satisfaction through the robot's behavior and measure it in real time through both implicit (facial expression, vocal tone) and explicit (survey responses) feedback.

Our iterative process was central to refining both the task and measurement methods. We initially tested various collaborative games, from Minecraft-based teamwork to creative guessing games like Pictionary. Through successive iterations, we identified key design factors necessary for effectively manipulating satisfaction while maintaining engagement, ultimately leading to the final structured tangram assembly task.

Iterative Design & Key Insights

Task Selection: Early prototypes built on open-ended or skill-dependent tasks (e.g., Minecraft, drawing games) produced highly variable participant responses, making experimental conditions difficult to control. We transitioned to a structured, turn-based task to enable more consistent data collection.

Behavioral Manipulation: Instead of simply varying task success, we modulated the robot’s relational behaviors, such as affective facial expressions and response sounds. This proved more effective at influencing satisfaction than varying performance alone.

Engagement & Investment: Participants were more engaged when they felt invested in the robot’s learning process. The leaderboard incentive and structured correction phases encouraged active participation and feedback.

Research Findings

Affect Matters More Than Accuracy: Participants rated the robot more highly when it displayed positive affect than when it was neutral or negatively expressive, even when it made errors.

Predictable Mistakes Improve Satisfaction: Low-stakes errors, followed by correction, led to increased participant investment in the task and higher overall satisfaction, aligning with findings in organizational psychology on low-conflict collaboration.

Self-Report vs. Behavioral Data: Traditional survey measures alone failed to capture moment-to-moment emotional shifts. Facial expression analysis revealed greater variation in real-time responses, suggesting a need for multimodal satisfaction measurement.

Next Steps

Future research will focus on real-world applications, including in-person human-robot collaboration and testing with fully implemented AI agents. We also aim to refine satisfaction models by integrating voice and behavioral tracking into adaptive robotic responses.

This project demonstrates that understanding human satisfaction in human-agent teaming requires more than measuring task success—it involves designing interactions that account for emotional engagement and perceived relationship quality. By iterating through different methodologies, we’ve taken steps toward more nuanced, real-time assessment of satisfaction in human-robot teams.

Participants worked with the “Collaborator Bot” AI/robotic agent on a Miro virtual whiteboard, trying to complete tangram puzzles faster than fictitious high scores.

Collaborator Bot AI affect faces, from left to right: Neutral, Negative, Positive. In both the negative and positive conditions, the robot switched to the blue, neutral rounded-square eye cycle while watching the participant's move. In both conditions, the robot's color changed to green while it was making or correcting its move and to yellow while it was receiving feedback.
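The display rules in this caption amount to a small mapping from (condition, task phase) to (face, color). The sketch below is a hypothetical illustration of that mapping, not the code used to drive the robot. The names `Condition`, `Phase`, and `robot_display` are invented for illustration, and two points are assumptions the caption does not state: that the affective robot shows its condition's face outside the watching phase, and that the neutral robot keeps its neutral face (its color behavior is left as `None` because the caption does not describe it).

```python
from enum import Enum
from typing import Optional, Tuple


class Condition(Enum):
    NEUTRAL = "neutral"
    NEGATIVE = "negative"
    POSITIVE = "positive"


class Phase(Enum):
    WATCHING = "watching"            # participant is making a move
    ACTING = "acting"                # robot is making or correcting its move
    RECEIVING_FEEDBACK = "feedback"  # robot is receiving the participant's feedback


# Colors described in the caption for the negative and positive conditions.
PHASE_COLOR = {
    Phase.WATCHING: "blue",
    Phase.ACTING: "green",
    Phase.RECEIVING_FEEDBACK: "yellow",
}


def robot_display(condition: Condition, phase: Phase) -> Tuple[str, Optional[str]]:
    """Return a hypothetical (face, color) pair for one condition and task phase."""
    if condition is Condition.NEUTRAL:
        # Assumption: the neutral robot keeps its neutral face throughout;
        # the caption does not describe its color changes, so color is None.
        return ("neutral", None)
    if phase is Phase.WATCHING:
        # Both affective conditions switch to the blue neutral rounded-square eye cycle.
        return ("neutral_eye_cycle", PHASE_COLOR[phase])
    # Assumption: in the other phases the robot shows its condition's affect face.
    return (condition.value, PHASE_COLOR[phase])


if __name__ == "__main__":
    for phase in Phase:
        print(phase.value, robot_display(Condition.POSITIVE, phase))
```

Keeping the watching-phase rule separate from the affect face mirrors the caption's point that both affective conditions share the same neutral "watching" display, so the manipulation is concentrated in the robot's own turns and feedback phases.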

Robot faces and animated behavior GIFs designed by Louis Riddick.

Participants' facial expression data were analyzed with the iMotions Affdex model. Each stream represents the model's certainty that a given emotion is being expressed in each frame.
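Because these streams are frame-level, they can be summarized over a whole session or compared around specific moments in the task, which end-of-task surveys cannot do. The sketch below shows one possible way to do both. It is not the study's analysis pipeline. It assumes each emotion's stream has been exported as a plain list of per-frame certainty values on a 0 to 100 scale. The function names `summarize_streams` and `event_delta`, the threshold of 50, and the idea of a known event frame (for example, the frame where the robot makes an error) are all hypothetical.

```python
from statistics import mean
from typing import Dict, List


def summarize_streams(
    streams: Dict[str, List[float]], threshold: float = 50.0
) -> Dict[str, Dict[str, float]]:
    """Mean certainty and share of frames at or above `threshold`, per emotion."""
    summary: Dict[str, Dict[str, float]] = {}
    for emotion, values in streams.items():
        if not values:
            continue  # skip emotions with no frames
        summary[emotion] = {
            "mean_certainty": mean(values),
            "frac_frames_above": sum(v >= threshold for v in values) / len(values),
        }
    return summary


def event_delta(values: List[float], event_frame: int, window: int) -> float:
    """Mean certainty over the `window` frames starting at `event_frame`,
    minus the mean over the `window` frames just before it."""
    before = values[max(0, event_frame - window):event_frame]
    after = values[event_frame:event_frame + window]
    if not before or not after:
        raise ValueError("event window falls outside the stream")
    return mean(after) - mean(before)


if __name__ == "__main__":
    streams = {"joy": [10.0, 80.0, 90.0, 20.0], "anger": [0.0, 5.0, 0.0, 3.0]}
    print(summarize_streams(streams))
    print(event_delta([10.0, 10.0, 10.0, 50.0, 70.0, 90.0], event_frame=3, window=3))
```

For the sample data, `joy` has a mean certainty of 50.0 with half its frames at or above the threshold, and the event-locked comparison returns 60.0, a rise after the hypothetical event. A whole-session mean can hide exactly this kind of shift, which is why the event-locked view is included alongside it.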